There Is No Backup Now

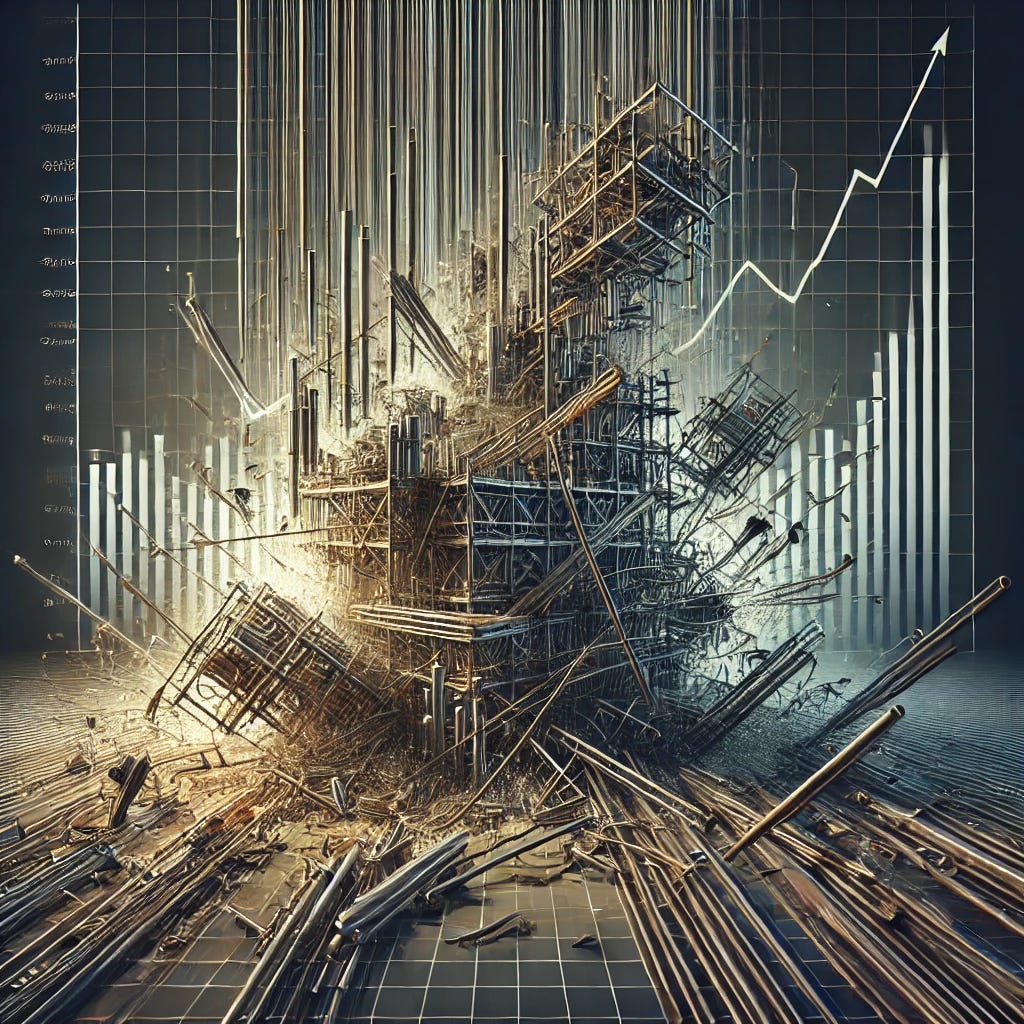

How AI Optimized Safety Away

Everything used to have backups.

Extra staff.

Spare capacity.

Manual overrides.

Second systems that rarely got used.

They looked inefficient.

They were expensive.

They saved us when things broke.

AI is quietly removing those layers. Not out of malice. Out of optimization. And as redundancy disappears, systems get faster, cheaper, and far more fragile.

Redundancy Was the Hidden Safety Net

Redundancy was never elegant.

It was practical.

Airplanes have multiple control systems.

Hospitals have overlapping roles.

Markets had human judgment layered on top of automation.

Engineers have long understood that complex systems survive shocks not by being perfect, but by having slack and duplication (Perrow 1999).

Redundancy absorbed error.

It bought time.
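A rough way to see why duplication buys so much safety is the standard arithmetic for parallel backups. The sketch below assumes each copy fails independently, which real systems often violate, and the 99% figure is a made-up illustration, not a measurement.

```python
def availability(p: float, n: int) -> float:
    """Chance that at least one of n independent copies is working.

    p is the probability that a single copy works (hypothetical value).
    Independence between copies is an assumption, not a given.
    """
    return 1 - (1 - p) ** n


one_system = availability(0.99, 1)
with_backup = availability(0.99, 2)

print(f"one system:  {one_system:.4f}")   # 0.9900
print(f"with backup: {with_backup:.4f}")  # 0.9999
```

Under those assumptions, the rarely used second system cuts expected downtime by a factor of a hundred. That is the margin that disappears when the backup is trimmed.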

AI Treats Redundancy as Waste

AI systems are built to optimize.

They identify overlap.

They remove duplication.

They compress processes into the minimum needed to function.

From a performance perspective, this is impressive. Industry research reports that AI-driven optimization can significantly reduce cost and increase throughput across logistics, manufacturing, and services (McKinsey 2023).

But redundancy looks like inefficiency to an optimizer.

So it gets trimmed.
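A toy sketch makes the mechanism concrete. It is not how any particular AI system works; the function names, the cost model, and the numbers are all hypothetical. The point is only that an objective which counts cost and expected demand, and nothing else, has no reason to keep spare capacity.

```python
def cheapest_capacity(expected_demand: int, unit_cost: float, max_units: int) -> int:
    """Pick the lowest-cost capacity that still covers expected demand.

    The objective only sees cost, so any unit above expected demand
    is pure waste from its point of view. (Hypothetical cost model.)
    """
    candidates = range(expected_demand, max_units + 1)
    return min(candidates, key=lambda units: units * unit_cost)


def unmet_demand(capacity: int, demand: int) -> int:
    """Demand that cannot be served when a spike exceeds capacity."""
    return max(0, demand - capacity)


lean = cheapest_capacity(expected_demand=100, unit_cost=1.0, max_units=150)
padded = 120   # the "inefficient" setup with 20 spare units
spike = 115    # a day that does not match the forecast

print(lean)                         # 100: all slack removed
print(unmet_demand(lean, spike))    # 15 units go unserved
print(unmet_demand(padded, spike))  # 0: the slack absorbs the spike
```

Nothing in the optimizer is wrong. It did what the objective asked. The spare 20 units only look valuable on the day demand hits 115.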

Speed Improves. Resilience Drops.

When redundancy disappears, everything works better until it doesn’t.

There is no second team to check the output.

No backup process to fall back on.

No slack when demand spikes or assumptions fail.

Researchers studying high-reliability systems warn that efficiency gains often come at the cost of resilience, especially when human buffers are removed (Weick and Sutcliffe 2015).

The system runs clean.

Right up to the edge.

Where This Is Showing Up Now

Customer support centers operate with minimal human escalation.

Airlines rely on tightly coupled scheduling systems.

Supply chains run with just-in-time precision.

Cloud services consolidate control into fewer layers.

When something breaks, recovery takes longer because there is nowhere else to go.

This pattern was visible during recent tech outages and logistics disruptions. Systems optimized for normal conditions struggled under stress (OECD 2022).

Why This Feels Fine at First

Most of the time, nothing goes wrong.

AI handles the load.

Processes run smoothly.

Costs stay low.

That success reinforces the model. Redundancy feels unnecessary. People forget why it was there in the first place.

Psychologists call this normalcy bias: the belief that tomorrow will look like yesterday, even in complex systems (Taleb 2012).

Humans Used to Be the Redundancy

People filled gaps.

They noticed anomalies.

They improvised.

As AI replaces those roles, human judgment is no longer layered in by default. It becomes an exception.

Researchers note that when humans are removed from operational loops, systems lose the ability to adapt creatively to unexpected conditions (Bainbridge 1983).

Efficiency rises.

Flexibility falls.

The Tradeoff No One Debated

This shift happened quietly.

No vote.

No public discussion.

No explicit choice.

Organizations adopted AI to compete. Redundancy disappeared as a side effect.

The result is not a broken world.

It is a thinner one.

What Happens During Real Stress

Crises reveal structure.

When demand spikes.

When inputs fail.

When assumptions collapse.

Systems without redundancy cannot bend. They snap. Recovery depends on rebuilding what was removed.

Resilience engineers argue that future systems must deliberately reintroduce redundancy in new forms, even if it looks inefficient on paper (Hollnagel et al. 2013).

AI is not making systems worse.

It is making them lean.

But lean systems survive only under expected conditions. Redundancy was the price we paid for surprise.

As AI optimizes away overlap, we gain speed and lose margin. That tradeoff stays invisible until the moment it matters most.

The danger is not collapse.

It is fragility disguised as progress.

References

Bainbridge, L. (1983). Ironies of automation. Automatica, 19(6), 775–779.

Hollnagel, E., Woods, D. D., & Leveson, N. (2013). Resilience Engineering. Ashgate.

McKinsey & Company. (2023). The State of AI in Operations.

OECD. (2022). Global Supply Chain Resilience. OECD Publishing.

Perrow, C. (1999). Normal Accidents. Princeton University Press.

Taleb, N. N. (2012). Antifragile. Random House.

Weick, K. E., & Sutcliffe, K. M. (2015). Managing the Unexpected. Wiley.
