The Parallax Instinct

Could Depth Perception Be a Primitive Form of Spatial Computation Across Evolutionary Scales?

Abstract

Depth perception has long been studied as a functional outcome of binocular vision, aiding navigation and survival. But what if parallax, the relative displacement of objects due to observer movement, is not merely a visual aid, but the evolutionary root of spatial computation itself? This article proposes the Parallax Instinct Hypothesis, arguing that depth resolution via parallax served as an ancestral computational scaffold for more abstract spatial reasoning, from neural mapping in insects to higher-order spatial logic in humans. We explore how early life may have used parallax-like sensorimotor cues before vision evolved, examine modern animal studies, and consider whether parallax could be a universal design principle in artificial systems, including robotics and neural networks.

1. Introduction

When a human closes one eye, the sense of depth diminishes. Yet even with one eye, many species (reptiles, birds, and insects among them) can infer depth. This has puzzled biologists: how can a single eye, or even a non-visual system, encode spatial layout? A growing body of research suggests that motion parallax, the apparent shift of objects in the visual field as the observer moves, provides critical information for inferring depth.
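
The geometry behind this cue can be made concrete with a minimal sketch. It assumes a single sensor that reports only the bearing to a static object, and an observer that takes a sideways step of known length. The function names and the flat two-dimensional setup are illustrative assumptions, not a model of any particular species.

```python
import math

def bearing(observer_x, point_x, point_z):
    """Bearing in radians, measured from the forward axis, for an observer at (observer_x, 0)."""
    return math.atan2(point_x - observer_x, point_z)

def depth_from_motion_parallax(bearing_before, bearing_after, step):
    """Depth of a static point from two bearings taken before and after
    a sideways step of known length (hypothetical helper)."""
    # tan(bearing) = lateral offset / depth, so a sideways step changes it by step / depth.
    shift = math.tan(bearing_before) - math.tan(bearing_after)
    if abs(shift) < 1e-12:
        raise ValueError("no measurable parallax: the point is effectively at infinity")
    return step / shift

# One eye, one sideways step of 0.5 units, object at lateral offset 1.0 and depth 4.0.
before = bearing(0.0, 1.0, 4.0)
after = bearing(0.5, 1.0, 4.0)
estimated_depth = depth_from_motion_parallax(before, after, 0.5)
print(round(estimated_depth, 6))
```

With these inputs the estimate comes out very close to the true depth of 4.0. Note that the observer must know how far it stepped: the depth estimate is only as good as the self-motion estimate.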

But what if this dynamic encoding of space isn't just a workaround for binocular absence? What if parallax was the first form of spatial computation, predating vision, and now deeply embedded in how organisms, and possibly minds, model dimensionality?

2. Parallax as Evolutionary Computation

Long before animals had image-forming eyes, simple organisms responded to gradients of light, temperature, or chemical concentration. Some early Cambrian creatures may have used primitive directional sensors that, through movement, induced spatial disparity in stimuli over time, an ancestral parallax, not based on light but on time-shifted gradients.

This sensorimotor discrepancy required organisms to model spatial relationships indirectly, a behavior that mimics computational primitives. For example, planarians adjust their movement paths based on chemical gradient discrepancies between their two auricles. Jumping spiders with multiple fixed-focus eyes perform depth judgments by scanning and updating internal representations, a form of spatial logic independent of binocular disparity. Electric fish compute the location of prey using time delays between electric field distortions, functionally similar to parallax.
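
As a hedged illustration of how a time-shifted gradient could carry distance information, the sketch below assumes a point source whose intensity falls off with the square of distance, and an organism that samples it before and after a step of known length straight toward the source. Both assumptions, and the function name, are introduced here for illustration only; real chemical or electric fields are far messier.

```python
import math

def distance_from_intensity_change(i_before, i_after, step):
    """Distance to the source before the step, assuming inverse-square
    falloff and a step of length `step` straight toward the source."""
    if i_before <= 0 or i_after <= i_before:
        raise ValueError("intensity must be positive and must rise along the step")
    # I is proportional to 1 / r^2, so sqrt(I_before / I_after) = (r - step) / r.
    ratio = math.sqrt(i_before / i_after)
    return step / (1.0 - ratio)

source_strength = 10.0   # unknown to the organism; it cancels out in the ratio
true_distance = 5.0
step = 1.0
i_before = source_strength / true_distance ** 2
i_after = source_strength / (true_distance - step) ** 2
estimate = distance_from_intensity_change(i_before, i_after, step)
print(round(estimate, 6))
```

The point of the sketch is that two samples separated in time, plus knowledge of one's own movement, are enough to recover a distance without knowing the absolute strength of the source.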

Thus, the parallax instinct may be the proto-algorithm by which life began thinking in space.

3. Neuroscience of Disparity and Motion

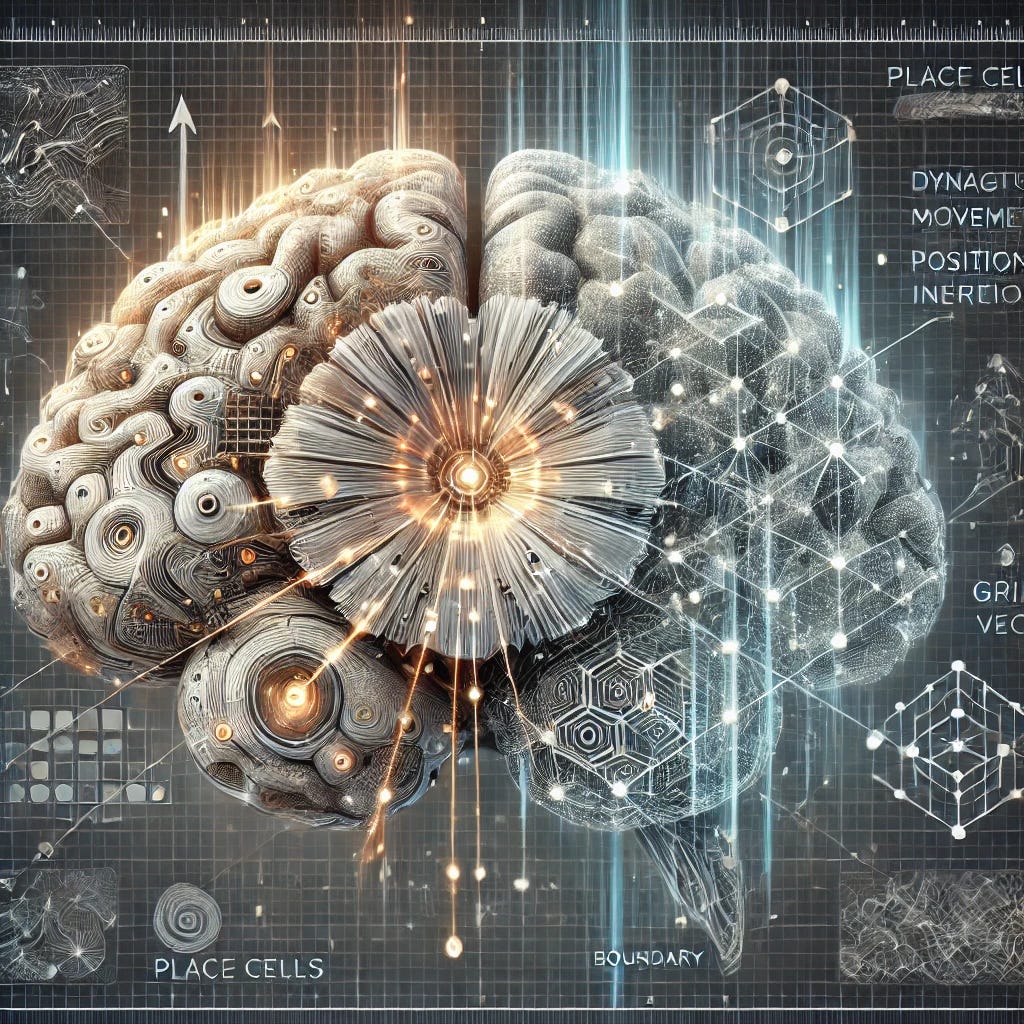

Neuroscience studies suggest that the brain processes motion-based depth and binocular disparity along partly distinct cortical pathways (e.g., area MT/V5 for motion, V1/V2 for disparity). In infants and blind individuals, the motion stream is often more developed, which would suggest that motion-induced parallax is more foundational than visual disparity.

In Drosophila, neural circuits in the lobula plate encode directional motion through a highly conserved pathway, suggesting early insects evolved internal mechanisms to simulate object displacement over time. These pathways show computational similarities to SLAM (Simultaneous Localization and Mapping) algorithms in autonomous robotics.
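
A classic textbook abstraction of direction-selective motion detection in insects is the Hassenstein-Reichardt correlator, which delays the signal from one photoreceptor and multiplies it with the undelayed signal from its neighbour. The sketch below is a minimal discrete-time version of that model, with a hypothetical function name and a one-sample delay chosen for illustration. It is not a description of the actual lobula plate circuitry.

```python
def reichardt_response(left, right, delay=1):
    """Summed opponent delay-and-correlate output for two neighbouring inputs.

    Positive values indicate motion from the left input toward the right
    input, negative values the reverse.
    """
    if len(left) != len(right):
        raise ValueError("both input signals must have the same length")
    total = 0.0
    for t in range(delay, len(left)):
        # Each half-detector pairs a delayed input with its undelayed neighbour.
        rightward_arm = left[t - delay] * right[t]
        leftward_arm = right[t - delay] * left[t]
        total += rightward_arm - leftward_arm
    return total

# A brief flash that reaches the left input one time step before the right one.
rightward = reichardt_response([0, 1, 0, 0], [0, 0, 1, 0])
# The same flash travelling the other way.
leftward = reichardt_response([0, 0, 1, 0], [0, 1, 0, 0])
# A flash that hits both inputs at once carries no direction signal.
stationary = reichardt_response([0, 1, 0, 0], [0, 1, 0, 0])
print(rightward, leftward, stationary)
```

The sign of the output encodes direction, and a simultaneous flash cancels out, which is the sense in which the detector responds to displacement over time rather than to brightness alone.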

Furthermore, in mammals, the hippocampal formation, long associated with spatial memory, contains cells (place cells in the hippocampus, grid cells in the entorhinal cortex, and boundary vector cells) that may derive their firing patterns not only from position but from movement-derived positional inference. In other words, our internal maps of the world may still rely on an ancient parallax engine.

4. Philosophical Implications: Is Dimensionality Felt Before It’s Understood?

If depth is first inferred from motion, not seen but felt, then our very concept of space may be rooted in embodied computation. This challenges the view that spatial reasoning is purely cortical or conceptual. Instead, it suggests that cognition evolved from continuous sensorimotor recalibration.

Philosophers like Merleau-Ponty emphasized the body as the locus of perception. Under the Parallax Instinct framework, depth and dimension are not abstract entities imposed on experience but emergent properties of dynamic discrepancy. We don’t just observe space; we resolve it.

5. Speculative Technological Extension

Could artificial intelligence be improved by embedding a synthetic parallax instinct? Imagine robotic systems that don’t merely process 3D maps but evolve depth intuition by comparing signal discrepancy during motion, the way slime molds, spiders, and planarians might.

Emerging neuromorphic hardware could mimic biological parallax pathways, not by stereo imaging, but by creating temporal offsets between sensory inputs. This could yield more energy-efficient, embodied AI that "learns" space through motion-induced discrepancy resolution.

6. Experimental Proposal

We propose an experiment using blindfolded human subjects in a controlled VR environment. Using auditory echolocation (clicks or pulses), participants would explore spatial layouts under varying parallax constraints. We would monitor three things: accuracy in identifying distances; neural correlates, via fMRI, in motion-processing and spatial memory areas; and changes in performance when temporal discrepancy is artificially increased or decreased.

If parallax is indeed a foundational spatial computation, participants should perform better when motion-generated discrepancies are preserved, even without visual cues.

7. Parallax and the Origins of Abstract Thought

The implications of the Parallax Instinct extend beyond spatial awareness into the very roots of abstract reasoning. Studies have shown that children develop depth inference before numerical or geometric abstraction, and the neural overlap between spatial cognition and mathematics suggests a shared developmental pathway (Dehaene et al., 1999).

Moreover, cultures with little exposure to formal schooling often develop rich spatial navigation abilities, sometimes outperforming Western counterparts (Haun et al., 2006). These findings hint that spatial logic, possibly rooted in parallax-based learning, may scaffold more complex conceptual abilities. In this light, the origin of symbolic thought, language structure, and logical sequencing could emerge from the capacity to resolve spatial differences dynamically over time.

If correct, this repositions parallax not as a vestigial trick of movement, but as the first computation, a bridge from embodied experience to symbolic abstraction.

8. Conclusion

The Parallax Instinct Hypothesis reframes depth perception not as a sensory supplement, but as a computational foundation for life’s grasp of space. From slime mold chemotaxis to human grid cells, spatial cognition may be less about where things are, and more about how things change as we move. Parallax, in this view, is not just a trick of the eyes, but a seed of the mind.

References

Dehaene, S., Spelke, E., Pinel, P., Stanescu, R., & Tsivkin, S. (1999). Sources of mathematical thinking: behavioral and brain-imaging evidence. Science, 284(5416), 970–974.

Haun, D. B., Rapold, C. J., Janzen, G., & Levinson, S. C. (2006). Plasticity of human spatial cognition: Spatial language and cognition covary across cultures. Current Biology, 16(14), 1414–1418.

Muller, L., Chavane, F., Reynolds, J., & Sejnowski, T. J. (2018). Cortical travelling waves: mechanisms and computational principles. Nature Reviews Neuroscience, 19(5), 255–268.

Investigating the Parallax Instinct Hypothesis: From Embodied Theory to Experimental Validation

1. Introduction: The Problem of Spatial Cognition Beyond Vision

Depth perception has traditionally been explained through the mechanism of binocular disparity, where two eyes provide slightly offset views that the brain combines to calculate spatial distance. While effective for many species, this explanation fails to account for the accurate spatial navigation observed in monocular, low-resolution, or even non-visual organisms (e.g., planarians, spiders, electric fish).

The core problem is this:

How can an organism or artificial agent infer spatial relationships — such as depth or distance — without stereo vision or detailed visual input?

Understanding this not only informs evolutionary biology but has profound implications for the design of adaptive, energy-efficient artificial intelligence systems.

⸻

2. The Parallax Instinct Hypothesis

We propose that movement-induced discrepancy — parallax — is not simply a workaround for the lack of binocular vision, but a foundational computational mechanism for spatial inference. In early life forms, this may have emerged through the perception of shifting gradients (chemical, electrical, thermal) over time as organisms moved.

This motion-based comparative learning evolved into more sophisticated spatial logic, eventually forming the basis for:

• Spatial navigation in simple creatures

• Grid cell activation in mammals

• Symbolic abstraction (geometry, number) in humans

• Potential architectural principles for AI

We call this the Parallax Instinct — an innate capacity to resolve dimensionality through the experience of motion and discrepancy over time.

⸻

3. Objectives

The study aims to:

1. Theoretically explore the biological plausibility of the parallax instinct.

2. Develop a method to test whether organisms (or AI agents) can infer space purely through movement-based discrepancy.

3. Design and execute experiments — first without, then with robots — to simulate and evaluate parallax-driven spatial learning.

⸻

4. Experimental Framework

4.1 Non-Robotic Test: Human Spatial Inference in the Absence of Vision

Objective:

Test whether humans can infer spatial layouts using motion-based auditory parallax in the absence of visual cues.

Setup:

• Participants wear VR headsets with the display blanked, so that head and body movements are tracked while no visual cues are available.

• They explore a virtual room using echolocation-like sounds (e.g., clicks or pulses) triggered by movement.

• Environments are designed with objects at varying distances and positions.

• Control group hears only static, global sounds (no parallax cue).

• Experimental group hears directionally offset sounds that change with head or body movement, simulating auditory parallax.
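
One way the experimental-group cue could be generated is sketched below. It assumes clicks emitted at the listener's own position, point-like reflecting objects, and a sound speed of 343 m/s in air; the function names and the flat two-dimensional room are hypothetical. The sketch shows the auditory analogue of parallax: for the same sideways step, the echo delay from a near object changes more than the delay from a far one.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second in air at roughly 20 degrees Celsius

def echo_delay(listener, obj):
    """Round-trip delay in seconds for a click emitted at `listener`
    and reflected by a point object at `obj`; both are (x, y) in metres."""
    return 2.0 * math.dist(listener, obj) / SPEED_OF_SOUND

def delay_shift(obj, start, end):
    """Change in echo delay when the listener moves from `start` to `end`."""
    return echo_delay(end, obj) - echo_delay(start, obj)

start, end = (0.0, 0.0), (0.5, 0.0)   # a 0.5 m sideways step
near_object = (0.0, 1.0)              # 1 m straight ahead of the start position
far_object = (0.0, 5.0)               # 5 m straight ahead of the start position

near_shift = delay_shift(near_object, start, end)
far_shift = delay_shift(far_object, start, end)
print(near_shift > far_shift)
```

In the control condition the delay would be held fixed regardless of movement, so the difference between `near_shift` and `far_shift` is exactly the information that the control group is denied.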

Measurements:

• Accuracy in recalling object placement

• Efficiency in navigation tasks (e.g., locating a target)

• fMRI or EEG activation in spatial reasoning and motion-processing brain regions (e.g., MT/V5, hippocampus)

Hypothesis:

Participants using motion-induced auditory discrepancy will demonstrate more accurate spatial understanding, supporting the theory that parallax-based computation underlies depth and layout inference.

⸻

4.2 Robotic Test: Embodied AI Agent with Parallax-Based Learning

Objective:

Determine if a robot can learn spatial relationships using motion-induced discrepancy, without stereo vision or high-resolution imaging.

Phase 1 – Simulation in Unity:

• Build a simple 3D environment with obstacles and goals.

• Create a virtual agent equipped with a single low-resolution sensor (simulated LIDAR, sonar, or grayscale camera).

• Allow the agent to move and compare sensory input across time to infer spatial layout (simulate parallax).

• Reward learning outcomes based on successful navigation, object avoidance, or spatial recall.
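
Before any learning is added, the comparison of sensory input across time can be prototyped with plain geometry. The sketch below is one possible baseline, not the proposed learning method: it assumes the agent knows its own pose at each step (for example from simulated odometry) and that its sensor reports only a bearing to a landmark in a shared world frame. The function name and observation format are hypothetical.

```python
import math

def triangulate_from_bearings(observations):
    """Least-squares landmark position from bearings taken along a path.

    Each observation is (agent_x, agent_y, bearing), with the bearing in
    radians measured from the x-axis of a shared world frame.
    """
    s_aa = s_ab = s_bb = s_ac = s_bc = 0.0
    for agent_x, agent_y, angle in observations:
        # The landmark lies on the line a*x + b*y = c that passes through the agent.
        a, b = math.sin(angle), -math.cos(angle)
        c = a * agent_x + b * agent_y
        s_aa += a * a
        s_ab += a * b
        s_bb += b * b
        s_ac += a * c
        s_bc += b * c
    # Solve the 2x2 normal equations by Cramer's rule.
    det = s_aa * s_bb - s_ab * s_ab
    if abs(det) < 1e-12:
        raise ValueError("bearings are parallel: the agent must move to create parallax")
    x = (s_ac * s_bb - s_ab * s_bc) / det
    y = (s_aa * s_bc - s_ab * s_ac) / det
    return x, y

landmark = (2.0, 3.0)
path = [(0.0, 0.0), (1.0, 0.0), (2.0, -1.0)]
observations = [
    (px, py, math.atan2(landmark[1] - py, landmark[0] - px)) for px, py in path
]
estimate = triangulate_from_bearings(observations)
print(tuple(round(v, 6) for v in estimate))
```

A single observation, or several taken without moving, leaves the system singular and raises an error, which mirrors the hypothesis that movement is what makes depth recoverable. A learned agent could be compared against this baseline to see how much of its performance is explained by simple triangulation.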

Phase 2 – Real-world Prototype Using ROS:

• Construct a small wheeled robot (e.g., Raspberry Pi + camera or rangefinder).

• Program it in ROS to move autonomously in a test environment, log sensor input over time, and learn depth/distance using movement-induced signal shift.

• Compare with a stereo-vision model to evaluate performance differences.

Measurements:

• Time to complete spatial navigation task

• Accuracy in reconstructing environmental layout

• Energy efficiency and processing load
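
The layout-accuracy measurement could be scored in several ways. One simple option, assumed here purely for illustration, is the root-mean-square error between the estimated and true object positions, which allows the parallax-driven and stereo-vision models to be compared on the same scale. The function name and the example coordinates are hypothetical.

```python
import math

def layout_rmse(estimated, ground_truth):
    """Root-mean-square distance between matched estimated and true positions.

    Both arguments are equally long, non-empty sequences of (x, y) points
    listed in the same object order.
    """
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("need two non-empty position lists of equal length")
    squared_errors = [math.dist(e, g) ** 2 for e, g in zip(estimated, ground_truth)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

true_layout = [(0.0, 0.0), (0.0, 0.0)]
reconstructed = [(0.0, 0.0), (3.0, 4.0)]   # second object is misplaced by 5 units
score = layout_rmse(reconstructed, true_layout)
print(round(score, 4))
```

Lower scores mean a more faithful reconstruction. The metric assumes the objects have already been matched between the two lists, which a real evaluation would have to handle separately.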

Hypothesis:

The parallax-driven robot will successfully infer spatial structure and navigate the environment using temporal discrepancy alone, indicating that spatial computation can emerge without stereo vision.

⸻

5. Broader Implications

If supported, this hypothesis could reshape how we:

• Understand the evolution of cognition (from embodied movement to abstraction)

• Teach and rehabilitate spatial reasoning in blind individuals

• Design adaptive AI for unpredictable or data-sparse environments

• Build energy-efficient robots that learn from movement rather than massive pretraining datasets

This would position parallax not as a visual trick, but as the ancestral root of thinking in space — and potentially, of thinking itself.

⸻

6. Next Steps

• Develop funding proposals for pilot studies (psychology, robotics, and neuroscience departments)

• Seek partnerships with cognitive science labs or robotics groups already working with Unity or ROS

• Publish preliminary results in open-access journals to encourage interdisciplinary feedback

Thank you again for sharing your Parallax Instinct Hypothesis. I found the idea deeply thought-provoking, especially in terms of how it might influence the design of artificial intelligence.

One point that really stood out to me is how this theory could allow AI systems to learn about their environment not through pre-loaded maps or static images, but through movement-based learning. Just like animals or infants, an AI could use parallax — the shifting of objects relative to motion — to gradually build an internal map of its surroundings.

This approach seems like it could make AI:

• More adaptive to new environments

• Less dependent on huge datasets

• More efficient, especially in low-power or low-resolution conditions

It also opens up the possibility of designing AI that doesn’t just see space but begins to understand it — through a kind of primitive, movement-based awareness. That idea really stayed with me.

I’m not in a position to help with developing the theory, but I wanted to let you know how much I appreciated the clarity and originality of your work — and how it helped me see both evolution and AI in a new light.