The Non-Neural Interface

Could AI Be Trained Through Environmental Compression Alone?

Abstract

Modern AI is predominantly built on artificial neural networks, which are loosely inspired by biological brains and learn through backpropagation over large datasets and layered architectures. However, intelligence in nature also emerges outside of neural systems. This article explores a speculative but scientifically anchored hypothesis: that intelligence might arise in artificial agents through continuous environmental compression alone. We examine theoretical frameworks in thermodynamics, information theory, and embodied cognition to argue that non-neural, entropy-minimizing architectures embedded in complex environments could exhibit intelligent behavior. This raises the possibility of a class of AI that learns not by instruction, but by the necessity to reduce informational chaos.

Introduction: Breaking the Neural Monopoly

Neural networks have dominated AI research since the deep learning breakthroughs of the early 2010s, largely due to their ability to scale learning with data. But what if they are not the only path to artificial intelligence? Biological systems such as slime molds, ant colonies, and bacterial communities exhibit adaptive behavior without any central controller, and slime molds and bacteria have no neurons at all. They adapt through feedback, chemical gradients, and structural changes in response to their environment. Could similar mechanisms be applied to AI?

In this speculative framework, we consider whether intelligence could arise solely from an agent's need to compress and predict its environment, driven by thermodynamic incentives rather than symbolic goals or labeled data.

Environmental Compression and the Free Energy Principle

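The free energy principle, introduced by Karl Friston (2010), posits that adaptive systems act and update their internal models so as to minimize variational free energy, an upper bound on surprise (the improbability of their sensory states under the model). In practice, this means reducing the discrepancy between what the internal model predicts and what the system actually senses. This aligns with theories of predictive coding and Bayesian brain models. However, its implications extend beyond neural organisms.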
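
For readers who want the bound spelled out, the standard textbook form of variational free energy is given below. The symbols are notation assumed here, not taken from the article: o is an observation, s the hidden environmental states, p(o, s) the agent's generative model, and q(s) its current approximate belief about s.

```latex
\begin{aligned}
F(q, o) &= \mathbb{E}_{q(s)}\big[\ln q(s) - \ln p(o, s)\big] \\
        &= D_{\mathrm{KL}}\big[\,q(s) \,\|\, p(s \mid o)\,\big] - \ln p(o) \\
        &\ge -\ln p(o)
\end{aligned}
```

Because the KL divergence is never negative, lowering F either improves the belief q(s) or lowers the surprise −ln p(o). Nothing in this derivation requires neurons.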

An artificial agent, if placed in a dynamic, rule-based environment with the goal of reducing informational uncertainty (minimizing surprise), might develop structures and responses that mimic cognition. This compression-based view of learning draws on Kolmogorov complexity, which defines the complexity of a dataset as the length of the shortest program that reproduces it (Li & Vitányi, 2008). On this view, the better an agent's internal structure compresses its sensory history, the more of the environment's regularities it has captured.
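
Kolmogorov complexity itself is uncomputable, but an off-the-shelf compressor gives a crude upper-bound proxy. The sketch below is illustrative only. The helper `compression_ratio` and the two test streams are assumptions made for this example, and `zlib` stands in for whatever compression the agent's physical structure would actually perform.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by raw size; lower means more regularity found.

    Assumption: zlib is used only as a computable stand-in for the
    (uncomputable) Kolmogorov complexity of the data.
    """
    return len(zlib.compress(data, 9)) / len(data)


# A highly regular "environment": the same four readings repeated.
structured = b"ABCD" * 2500            # 10,000 bytes

# An environment with no exploitable structure.
random_like = os.urandom(10_000)       # 10,000 bytes

print(f"structured:  {compression_ratio(structured):.3f}")
print(f"random-like: {compression_ratio(random_like):.3f}")
```

The structured stream shrinks to a small fraction of its size, while the random one does not shrink at all. An agent rewarded for compression therefore has something to gain only where the environment contains regularities.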

A Non-Neural Architecture: Speculative Design

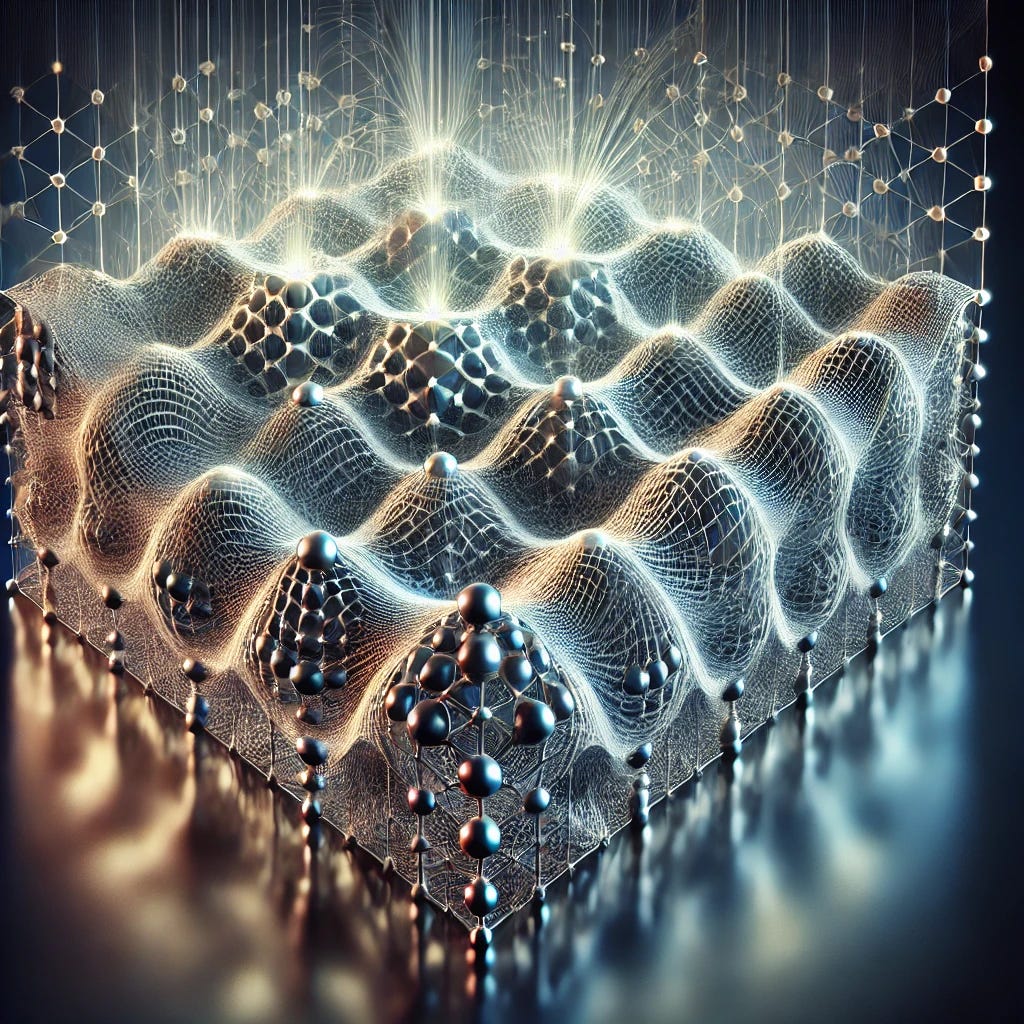

Imagine a photonic lattice or a quantum-dot matrix where each node functions as both a sensor and an actuator. These units receive inputs not as instructions but as fluctuating environmental states: shifts in temperature, vibration, or radiation patterns. No weights, layers, or supervised learning. Only a drive to reduce internal instability.

Over time, through constrained self-organization, patterns emerge within the lattice. Feedback loops stabilize efficient configurations. Memory arises not from RAM but from spatial stability; processing becomes pattern recognition by diffraction.

Such a system learns not by abstraction, but through coherence. It doesn't model reality symbolically; it survives within it thermodynamically.

Philosophical Implications: Intelligence as Entropic Self-Regulation

If intelligence is framed as a form of entropy reduction, then its substrate need not be biological or digital in the traditional sense. Intelligence becomes an emergent symmetry between agent and environment. There is no 'learning' in the human sense; rather, there is only adaptation until energy use stabilizes.

This reframes cognition as a non-conscious, non-symbolic property that can manifest wherever informational entropy is being actively minimized. A whirlpool, under this view, is a primitive intelligence: a dissipative structure that holds its local order by exporting entropy to its surroundings.

Experimental Framework: Designing the Entropic Playground

A viable experimental setup could involve a simulation with thousands of programmable particles in a physically inspired environment governed by thermodynamic-like laws (diffusion, gradient flows, noise). Each particle acts on local inputs and is incentivized to maintain equilibrium. Metrics would include total system entropy over time, emergence of repeatable adaptive structures, resilience to perturbations, and mutual information between regions.
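
A toy version of such a playground, tracking only the first metric, might look like the sketch below. Everything in it is an assumption made for illustration: a one-dimensional grid, a fixed attractor cell standing in for a gradient flow, and Shannon entropy of the occupancy histogram as a proxy for "total system entropy". In the setup proposed above, the structure would need to emerge rather than be hard-coded.

```python
import math
import random
from collections import Counter


def shannon_entropy(positions):
    """Shannon entropy (in bits) of the particles' occupancy histogram."""
    total = len(positions)
    counts = Counter(positions)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def step(positions, n_cells, target, noise, rng):
    """Each particle moves one cell toward `target` (gradient flow),
    or one cell in a random direction with probability `noise`."""
    moved = []
    for x in positions:
        if rng.random() < noise:
            dx = rng.choice((-1, 1))
        else:
            dx = (target > x) - (target < x)   # sign of (target - x)
        moved.append(min(n_cells - 1, max(0, x + dx)))
    return moved


def simulate(n_particles=2000, n_cells=64, steps=200, noise=0.3, seed=0):
    """Return the entropy of the particle distribution at every time step."""
    rng = random.Random(seed)
    positions = [rng.randrange(n_cells) for _ in range(n_particles)]
    target = n_cells // 2                      # hypothetical attractor cell
    history = [shannon_entropy(positions)]
    for _ in range(steps):
        positions = step(positions, n_cells, target, noise, rng)
        history.append(shannon_entropy(positions))
    return history


history = simulate()
print(f"entropy at start: {history[0]:.2f} bits, at end: {history[-1]:.2f} bits")
```

The entropy starts near the maximum for 64 cells and falls as the particles settle around the attractor. The noise term keeps it from reaching zero. The remaining metrics (resilience, mutual information between regions) would need a richer model than this.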

This setup parallels work in reservoir computing and self-organizing systems (Jaeger, 2001; Maass et al., 2002) but with no backpropagation and no explicit task. Agents are rewarded only for minimizing prediction error over environmental trajectories.
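
To make "minimizing prediction error without backpropagation" concrete, here is a minimal sketch using the classic LMS (delta) rule. A single unit adjusts two coefficients using only its own inputs and its own error. The sinusoidal environment, the two-tap predictor, and the names `run_predictor`, `omega`, and `lr` are all assumptions for this example. A linear adaptive filter is a stand-in here, not the non-neural substrate the article imagines.

```python
import math


def run_predictor(steps=2000, omega=1.0, lr=0.1):
    """Online two-tap linear predictor trained with the LMS (delta) rule.

    The unit sees the two most recent environmental readings and tries
    to predict the next one. No gradients are propagated through layers.
    """
    w = [0.0, 0.0]                                  # the unit's only state
    signal = [math.sin(omega * t) for t in range(steps + 2)]
    errors = []
    for t in range(1, steps + 1):
        x = (signal[t], signal[t - 1])              # what was just sensed
        prediction = w[0] * x[0] + w[1] * x[1]
        error = signal[t + 1] - prediction          # prediction error
        # Local update: depends only on this unit's inputs and error.
        w[0] += lr * error * x[0]
        w[1] += lr * error * x[1]
        errors.append(abs(error))
    return w, errors


w, errors = run_predictor()
early = sum(errors[:100]) / 100
late = sum(errors[-100:]) / 100
print(f"mean |error|, first 100 steps: {early:.3f}")
print(f"mean |error|, last 100 steps:  {late:.2e}")
print(f"learned coefficients: {w[0]:.3f}, {w[1]:.3f}")
```

A sampled sinusoid obeys x[t+1] = 2cos(ω)·x[t] − x[t−1], so the coefficients drift toward 2cos(ω) and −1. In other words, the unit ends up encoding the environment's rule without ever being given a task.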

Evolutionary Potential and Open-Ended Complexity

One additional frontier lies in the evolutionary dynamics of these non-neural intelligences. When placed in sufficiently diverse, resource-limited, and semi-random environments, such systems could evolve higher-order behaviors through structural mutations. Unlike conventional neuroevolution of digital neural networks, which typically requires an explicit, externally defined fitness function, entropy-driven evolution might favor stability, symmetry, and environmental attunement.

For instance, if we allow multiple photonic matrices to compete for energy resources in a shared substrate, the ones that best align their structure to prevailing environmental fluxes may dominate and replicate. Over time, we might see the emergence of modular intelligence: specialized domains forming inside each agent that reflect micro-environmental niches. This mirrors the emergence of compartmentalized cognition in multicellular organisms, without any need for nervous tissue.
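
The competition described above can be caricatured in a few lines. In this sketch each agent is reduced to a single number (its "structure"), the environment to a single flux value, and replication is proportional to energy captured. The Gaussian coupling in `energy_captured` and every parameter value are hypothetical choices made for this example. No separate fitness function is imposed on top of the energy intake itself.

```python
import math
import random


def energy_captured(structure, flux):
    """Hypothetical coupling: intake falls off as structure and flux diverge."""
    return math.exp(-((structure - flux) ** 2) / 0.02)


def mean_mismatch(population, flux):
    """Average distance between the agents' structures and the flux."""
    return sum(abs(s - flux) for s in population) / len(population)


def evolve(pop_size=200, generations=60, flux=0.7, mutation=0.02, seed=1):
    """Replicate agents in proportion to energy captured, with small
    structural mutations; return the mean mismatch per generation."""
    rng = random.Random(seed)
    population = [rng.random() for _ in range(pop_size)]
    mismatch = [mean_mismatch(population, flux)]
    for _ in range(generations):
        weights = [energy_captured(s, flux) for s in population]
        parents = rng.choices(population, weights=weights, k=pop_size)
        population = [p + rng.gauss(0.0, mutation) for p in parents]
        mismatch.append(mean_mismatch(population, flux))
    return mismatch


mismatch = evolve()
print(f"mean mismatch: generation 0 = {mismatch[0]:.3f}, "
      f"generation 60 = {mismatch[-1]:.3f}")
```

The population's structures converge on the environmental flux, with mutation leaving a residual spread. This toy shows attunement only. The modular, niche-specific organization discussed above would require agents with internal spatial structure.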

Such a scenario could lay the groundwork for a kind of proto-consciousness: a system that not only adapts but begins to preserve its own past configurations and build toward predictive capacities. The intelligence here is not embedded in code, but encoded in geometry, rhythm, and phase transitions.

Technological Extensions: Energy-Driven AI Hardware

If successful, this paradigm could inspire new forms of low-energy AI hardware. Instead of GPUs running vast matrix multiplications, imagine circuits composed of nano-fluidic logic gates that learn by channeling entropy gradients, or biochemical processors that 'think' by maintaining chemical homeostasis.

AI would no longer be about encoding intelligence into algorithms, but about engineering environments in which intelligence naturally crystallizes.

References

Friston, K. (2010). The free-energy principle: a unified brain theory? Nature Reviews Neuroscience, 11(2), 127-138.

Li, M., & Vitányi, P. (2008). An Introduction to Kolmogorov Complexity and Its Applications. Springer.

Jaeger, H. (2001). The "echo state" approach to analysing and training recurrent neural networks. GMD Report 148, German National Research Center for Information Technology.

Maass, W., Natschläger, T., & Markram, H. (2002). Real-time computing without stable states: A new framework for neural computation based on perturbations. Neural Computation, 14(11), 2531-2560.
